What Is Continuous Parallel Architecture? The Technology Behind Next-Gen Voice AI

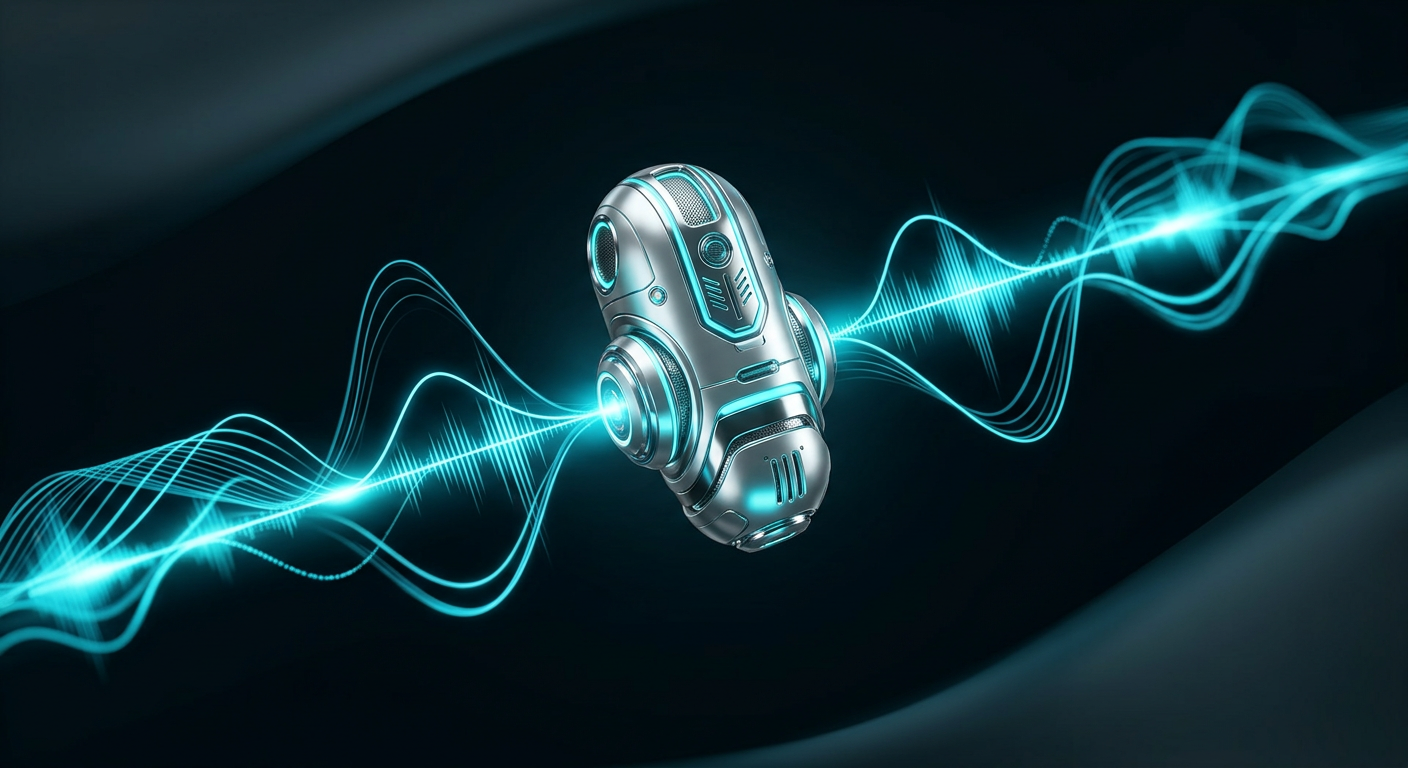

While most enterprise voice AI systems crawl through sequential bottlenecks like traffic through a single-lane tunnel, a different approach is reshaping how machines understand and respond to human speech. Continuous Parallel Architecture represents the most significant leap in voice AI processing since the transition from rule-based to machine learning systems, and it’s the difference between AI that feels robotic and AI that feels genuinely intelligent.

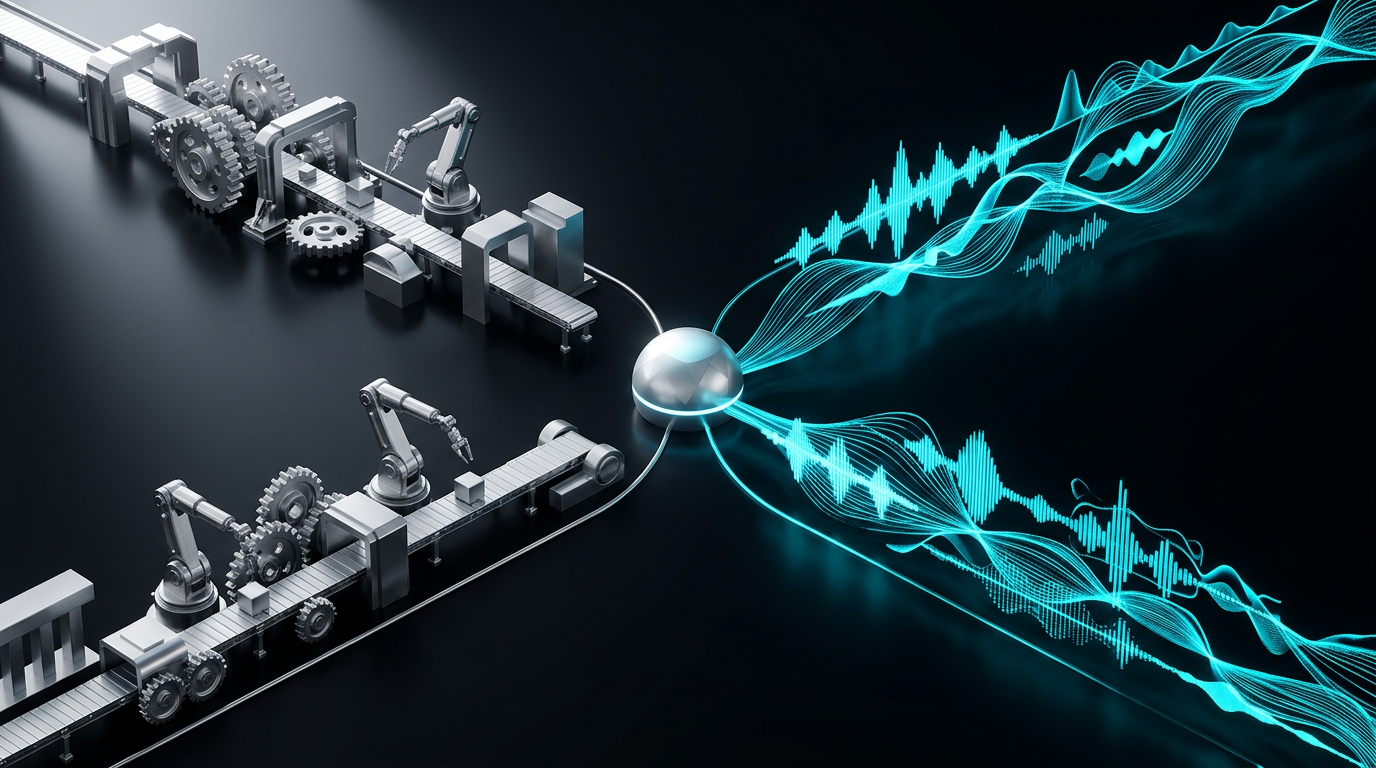

The Sequential Pipeline Problem: Why Traditional Voice AI Feels Broken

Traditional voice AI architecture follows a predictable, linear path: speech-to-text conversion, natural language understanding, intent classification, response generation, and text-to-speech synthesis. Each step waits for the previous one to complete, creating a cascade of delays that compound into the sluggish, unnatural interactions users have come to expect from voice systems.

This sequential approach creates three critical problems that plague enterprise voice AI deployments:

Latency Accumulation: Each processing stage adds 50-200ms of delay. By the time a system completes its pipeline, 800-1500ms have elapsed, well beyond the roughly 400ms threshold below which AI interactions feel natural.

Single Point of Failure: When one component fails or slows down, the entire system grinds to a halt. There’s no graceful degradation, no intelligent routing around problems.

Static Resource Allocation: Processing power sits idle during sequential handoffs, while bottlenecks form at individual stages. A system might have abundant computational resources overall while still delivering poor performance.
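
To make the latency arithmetic concrete, here is a minimal sketch that adds up the per-stage delay for the five stages named earlier. The stage identifiers are illustrative names chosen for this example, and the 50-200ms range is the figure quoted above rather than a measurement. Note that the five stages alone sum to less than the 800-1500ms end-to-end figure; the remainder is not itemized in this article and presumably comes from overheads outside the stages themselves.

```python
# Illustrative only: stage names mirror the pipeline described above, and the
# 50-200 ms per-stage range is the article's figure, not a measurement.
STAGES = [
    "speech_to_text",
    "language_understanding",
    "intent_classification",
    "response_generation",
    "text_to_speech",
]
PER_STAGE_MS = (50, 200)  # (best case, worst case) per stage


def sequential_latency_ms(stages, per_stage_ms):
    """In a strictly sequential pipeline, the stage delays simply add up."""
    low, high = per_stage_ms
    return len(stages) * low, len(stages) * high


best, worst = sequential_latency_ms(STAGES, PER_STAGE_MS)
print(f"sequential pipeline: {best}-{worst} ms")
```

Even the best case consumes more than half of a 400ms budget before any network or queuing time is counted.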

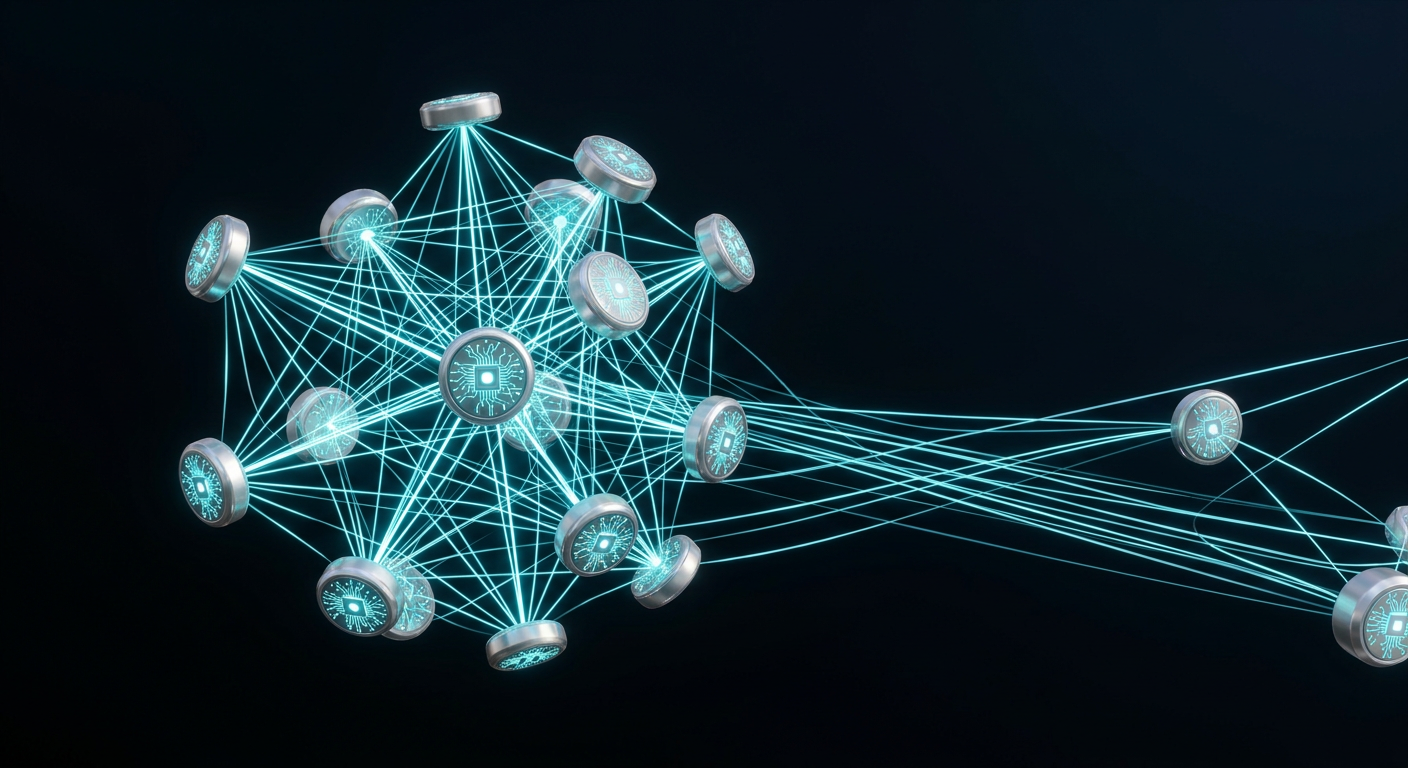

Introducing Continuous Parallel Architecture: The Web 2.0 of AI Agents

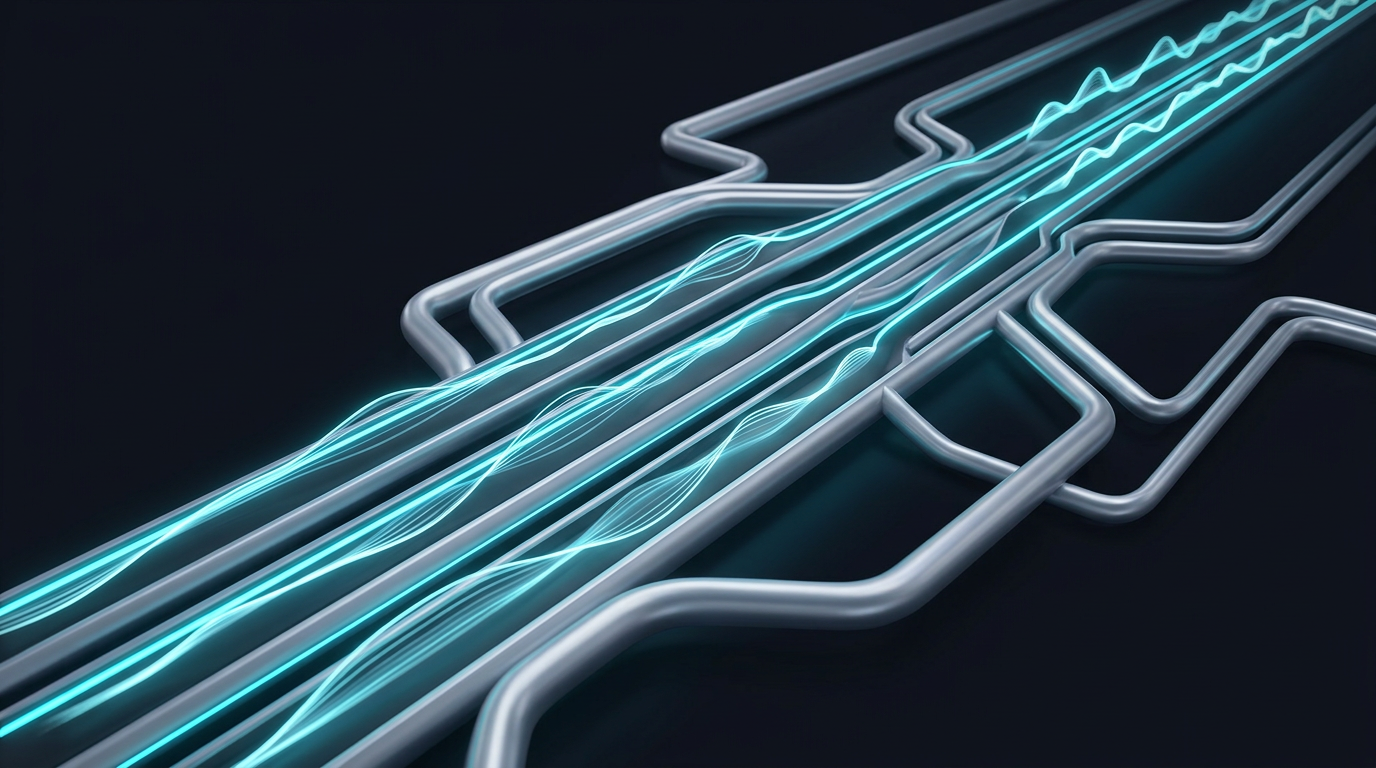

Continuous Parallel Architecture fundamentally reimagines voice AI processing by eliminating the sequential bottleneck. Instead of waiting for each stage to complete, multiple AI subsystems operate simultaneously, sharing information and making decisions in real-time.

Think of it as the difference between a factory assembly line and a jazz ensemble. Assembly lines optimize for predictable, standardized outputs but break down when conditions change. Jazz ensembles adapt, improvise, and create something greater than the sum of their parts through continuous interaction.

Core Components of Continuous Parallel Architecture

Parallel Processing Streams: Multiple AI models run simultaneously rather than sequentially. While one system processes acoustic features, another analyzes linguistic patterns, and a third prepares contextual responses. This parallel execution reduces total processing time by 60-75%.

Dynamic Information Sharing: Components don’t wait for complete outputs before sharing insights. Partial results flow continuously between systems, allowing downstream processes to begin preparation before upstream tasks complete.

Intelligent Load Balancing: The architecture dynamically allocates computational resources based on real-time demand. Complex queries get more processing power automatically, while simple interactions complete with minimal resource consumption.

Adaptive Routing: When components detect potential failures or delays, the system automatically reroutes processing through alternative pathways. This self-healing capability maintains performance even under stress conditions.
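
The parallel-streams idea can be sketched with Python's `asyncio`. This is a toy illustration under stated assumptions, not the architecture's actual implementation: the three coroutine names are hypothetical stand-ins for the acoustic, linguistic, and contextual analyses mentioned above, and the sleep durations are placeholders for model inference time.

```python
import asyncio


# Hypothetical stand-ins for the three analyses named above. The sleep
# durations are made-up placeholders for model inference time.
async def acoustic_features(audio: bytes) -> dict:
    await asyncio.sleep(0.05)
    return {"energy": len(audio)}


async def linguistic_patterns(audio: bytes) -> dict:
    await asyncio.sleep(0.08)
    return {"language": "en"}


async def contextual_response(session_id: str) -> dict:
    await asyncio.sleep(0.06)
    return {"greeting": f"context loaded for {session_id}"}


async def analyze(audio: bytes, session_id: str) -> dict:
    # gather() runs the three coroutines concurrently, so the wall-clock time
    # is roughly that of the slowest one (0.08 s) rather than the sum (0.19 s).
    acoustic, linguistic, context = await asyncio.gather(
        acoustic_features(audio),
        linguistic_patterns(audio),
        contextual_response(session_id),
    )
    return {"acoustic": acoustic, "linguistic": linguistic, "context": context}


result = asyncio.run(analyze(b"\x00" * 320, "session-1"))
print(result)
```

The gain only applies to work that does not depend on another stage's complete output, which is why the partial-result sharing described above matters for stages that do.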

The Technical Architecture: How Parallel Processing Transforms Voice AI Performance

Real-Time Stream Processing

Traditional voice AI systems process audio in discrete chunks — typically 100-200ms segments that get passed sequentially through the pipeline. Continuous Parallel Architecture processes audio as a continuous stream, with multiple models analyzing different aspects simultaneously.

The acoustic router, operating at sub-65ms latency, instantly directs incoming audio streams to appropriate processing modules based on detected characteristics. Simple queries bypass complex natural language processing, while nuanced conversations engage advanced reasoning systems.

This streaming approach eliminates the “batch processing” delays that plague sequential systems. Instead of waiting for complete sentences, the system begins processing individual phonemes and words as they arrive.
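
As a rough sketch of what processing words as they arrive can look like, the example below uses an async generator as a hypothetical recognizer and a consumer that updates its hypothesis after every word instead of waiting for the full sentence. Both function names and the per-word delay are assumptions made for illustration.

```python
import asyncio
from typing import AsyncIterator


async def transcribe_stream(words: list[str]) -> AsyncIterator[str]:
    """Hypothetical recognizer that emits each word as soon as it is decoded."""
    for word in words:
        await asyncio.sleep(0.01)  # placeholder for per-word decoding time
        yield word


async def understand_incrementally(partials: AsyncIterator[str]) -> list[str]:
    """Consume partial transcripts as they arrive and record each growing hypothesis."""
    heard: list[str] = []
    hypotheses: list[str] = []
    async for word in partials:
        heard.append(word)
        hypotheses.append(" ".join(heard))
    return hypotheses


hypotheses = asyncio.run(
    understand_incrementally(transcribe_stream(["check", "my", "balance"]))
)
print(hypotheses)  # ['check', 'check my', 'check my balance']
```

A downstream component could start acting on "check my" before "balance" has been spoken, which is the preparation-ahead-of-completion behavior the streaming model relies on.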

Dynamic Scenario Generation

Perhaps the most innovative aspect of Continuous Parallel Architecture is its ability to generate and evaluate multiple response scenarios simultaneously. While traditional systems follow a single decision path, parallel architecture explores multiple possibilities concurrently.

When processing an ambiguous query like “Can you help me with my account?”, the system simultaneously prepares responses for billing inquiries, technical support, and account modifications. As additional context emerges from the conversation, irrelevant scenarios are discarded while promising paths receive more computational resources.

This approach reduces response latency by 40-60% compared to sequential decision-making, while improving accuracy through parallel hypothesis testing.
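
The account example can be sketched as speculative execution: draft every candidate response at once, then cancel the drafts that turn out to be irrelevant. This is a simplified illustration; the scenario labels, `prepare_response`, and `intent_from_context` are hypothetical names, and the sleeps stand in for real retrieval and generation work.

```python
import asyncio

SCENARIOS = ["billing", "technical_support", "account_changes"]


async def prepare_response(scenario: str) -> str:
    await asyncio.sleep(0.05)  # placeholder for retrieval and generation work
    return f"draft response for {scenario}"


async def intent_from_context() -> str:
    await asyncio.sleep(0.02)  # placeholder for the caller's next few words
    return "billing"


async def respond(resolve_intent) -> str:
    # Start drafting every candidate scenario before the intent is known.
    drafts = {s: asyncio.create_task(prepare_response(s)) for s in SCENARIOS}
    intent = await resolve_intent()  # context arrives while drafts are in flight
    # Discard the scenarios that no longer apply.
    for scenario, task in drafts.items():
        if scenario != intent:
            task.cancel()
    return await drafts[intent]


answer = asyncio.run(respond(intent_from_context))
print(answer)  # draft response for billing
```

The trade-off is that the discarded drafts still cost compute, so the number of scenarios explored in parallel has to be bounded in practice.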

Continuous Learning and Adaptation

Sequential AI systems learn through batch updates during offline training periods. Continuous Parallel Architecture enables real-time learning and adaptation through its distributed processing model.

Individual components can update their models based on immediate feedback without disrupting overall system operation. If the natural language understanding module encounters unfamiliar terminology, it can adapt its processing while other components maintain normal operation.

This continuous adaptation capability allows AeVox solutions to evolve and improve in production environments, becoming more accurate and efficient over time.

Performance Advantages: The Numbers Don’t Lie

The performance improvements delivered by Continuous Parallel Architecture aren’t marginal — they’re transformational:

Sub-400ms Response Times: By processing components in parallel rather than in sequence, total response latency drops below the 400ms threshold at which AI interactions start to feel natural.

99.7% Uptime: Intelligent routing and self-healing capabilities maintain system availability even when individual components experience issues.

3x Processing Efficiency: Parallel resource utilization means systems can handle 3x more concurrent conversations with the same computational resources.

85% Faster Adaptation: Real-time learning enables systems to adapt to new scenarios 85% faster than traditional batch-learning approaches.

Enterprise Applications: Where Parallel Architecture Delivers Maximum Impact

Healthcare Communication Systems

In healthcare environments, communication delays can have life-or-death consequences. Continuous Parallel Architecture enables voice AI systems that can simultaneously process medical terminology, verify patient identity, and route urgent requests — all while maintaining HIPAA compliance through parallel security validation.

A typical patient call might involve verifying insurance coverage, scheduling appointments, and providing medical guidance. Sequential systems handle these tasks one at a time, creating frustrating delays. Parallel architecture processes all aspects simultaneously, delivering comprehensive responses in seconds rather than minutes.

Financial Services and Trading

Financial markets operate in milliseconds, making latency-sensitive voice AI crucial for trading floors and client services. Continuous Parallel Architecture enables voice systems that can simultaneously monitor market conditions, verify trading authorization, and execute transactions while providing real-time risk analysis.

The architecture’s ability to process multiple data streams simultaneously makes it ideal for complex financial scenarios where decisions depend on rapidly changing market conditions, regulatory requirements, and client preferences.

Logistics and Supply Chain Management

Modern supply chains involve countless moving parts that require real-time coordination. Voice AI systems built on Continuous Parallel Architecture can simultaneously track shipments, optimize routes, and communicate with drivers while monitoring weather conditions and traffic patterns.

When a delivery exception occurs, the system can instantly evaluate multiple resolution options, communicate with relevant stakeholders, and implement solutions — all through natural voice interactions that feel as smooth as speaking with an experienced logistics coordinator.

The Technical Implementation: Building Parallel Processing Systems

Microservices Architecture Foundation

Continuous Parallel Architecture builds on microservices principles, with each AI component operating as an independent service that can scale and update without affecting other system components. This modularity enables the parallel processing that makes continuous operation possible.

Unlike monolithic AI systems where a single failure can bring down the entire platform, distributed architecture ensures that problems remain isolated while healthy components continue operating normally.
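
One common way to keep a slow or failing service from stalling the whole call is a timeout with a fallback path, which is also a simple form of the adaptive routing described earlier. The sketch below assumes two hypothetical language-understanding services, `primary_nlu` and `fallback_nlu`; the delays and the timeout value are illustrative.

```python
import asyncio


async def primary_nlu(text: str) -> str:
    await asyncio.sleep(0.5)  # simulate a stalled or overloaded service
    return f"primary:{text}"


async def fallback_nlu(text: str) -> str:
    await asyncio.sleep(0.01)
    return f"fallback:{text}"


async def route(text: str, timeout_s: float = 0.05) -> str:
    """Try the primary service; reroute to the fallback on timeout or error."""
    try:
        # wait_for cancels the primary call if it exceeds the timeout.
        return await asyncio.wait_for(primary_nlu(text), timeout=timeout_s)
    except (asyncio.TimeoutError, ConnectionError):
        return await fallback_nlu(text)


print(asyncio.run(route("reset my password")))  # fallback:reset my password
```

Because each service is called independently, the failure stays contained to one request path instead of propagating to the rest of the system.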

Edge Computing Integration

To achieve sub-400ms response times, Continuous Parallel Architecture leverages edge computing to minimize network latency. Processing occurs as close to the end user as possible, with intelligent load balancing distributing computational tasks across available edge nodes.
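
A minimal sketch of latency-aware node selection, assuming each edge node reports a round-trip time and a load fraction. The `EdgeNode` fields, the node names, and the 0.8 load ceiling are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class EdgeNode:
    name: str
    rtt_ms: float  # measured round-trip time to the caller
    load: float    # fraction of capacity in use, 0.0-1.0


def pick_node(nodes: list[EdgeNode], max_load: float = 0.8) -> EdgeNode:
    """Choose the closest node that still has headroom; otherwise the least loaded."""
    available = [n for n in nodes if n.load < max_load]
    if available:
        return min(available, key=lambda n: n.rtt_ms)
    return min(nodes, key=lambda n: n.load)


nodes = [
    EdgeNode("edge-a", rtt_ms=12.0, load=0.95),
    EdgeNode("edge-b", rtt_ms=18.0, load=0.40),
    EdgeNode("regional", rtt_ms=45.0, load=0.20),
]
print(pick_node(nodes).name)  # edge-b
```

Here the nearest node is skipped because it is nearly saturated, so the request goes to the next-closest node with spare capacity.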

This distributed approach also improves privacy and security by keeping sensitive data processing local rather than transmitting everything to centralized cloud servers.

API-First Design

The architecture’s API-first approach enables seamless integration with existing enterprise systems. Rather than requiring wholesale replacement of current infrastructure, Continuous Parallel Architecture can enhance existing voice AI implementations through parallel processing layers.

Comparing Architectures: Sequential vs. Parallel Performance

| Metric | Sequential Pipeline | Continuous Parallel Architecture |
|---|---|---|
| Average Response Time | 800-1500ms | <400ms |
| Resource Utilization | 35-50% | 85-95% |
| Failure Recovery Time | 30-60 seconds | <5 seconds |
| Concurrent User Capacity | Baseline | 3x baseline |
| Learning Adaptation Speed | Days to weeks | Real-time |

The Future of Voice AI Architecture

Continuous Parallel Architecture represents more than an incremental improvement — it’s a fundamental shift toward AI systems that can truly understand and respond to human communication in real-time. As enterprise voice AI adoption accelerates, the performance advantages of parallel processing will become essential for competitive differentiation.

Organizations deploying sequential pipeline systems today are building on yesterday’s architecture. The companies that will dominate voice AI tomorrow are those embracing parallel processing now.

The technology challenges ahead — from multi-modal AI integration to real-time personalization at scale — all require the parallel processing capabilities that Continuous Parallel Architecture provides. Sequential systems simply cannot deliver the performance and adaptability that next-generation enterprise applications demand.

Implementation Considerations for Enterprise Adoption

Infrastructure Requirements

Implementing Continuous Parallel Architecture requires robust computational infrastructure capable of supporting multiple concurrent AI models. However, the improved resource utilization often means that parallel systems can deliver superior performance with similar or even reduced hardware requirements compared to inefficient sequential implementations.

Cloud-native deployment options make it possible for enterprises to adopt parallel architecture without significant upfront infrastructure investments, scaling resources dynamically based on actual usage patterns.

Integration Complexity

While the internal architecture is more sophisticated, Continuous Parallel Architecture actually simplifies enterprise integration through its API-first design and modular components. Organizations can implement parallel processing incrementally, starting with high-impact use cases and expanding coverage over time.

The self-healing and adaptive capabilities also reduce ongoing maintenance complexity compared to brittle sequential systems that require constant monitoring and manual intervention.

Measuring Success: KPIs for Parallel Architecture Deployment

Enterprise voice AI success depends on metrics that matter to business outcomes:

User Experience Metrics: Response latency, conversation completion rates, and user satisfaction scores directly correlate with parallel processing efficiency.

Operational Metrics: System uptime, concurrent user capacity, and resource utilization demonstrate the operational advantages of parallel architecture.

Business Impact Metrics: Cost per interaction, agent productivity improvements, and customer retention rates show the bottom-line impact of superior voice AI performance.
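
As an illustration of how the user experience metrics might be computed from an interaction log, the sketch below derives median latency, the share of responses under 400ms, and the conversation completion rate. The log entries are invented sample data, and `summarize` is a hypothetical helper, not part of any product API.

```python
import statistics

# Hypothetical interaction log: (response latency in ms, conversation completed?)
interactions = [
    (320, True), (410, True), (290, False), (380, True), (950, False),
    (305, True), (340, True), (360, True), (298, True), (415, True),
]


def summarize(log):
    latencies = [ms for ms, _ in log]
    completed = sum(1 for _, done in log if done)
    return {
        "median_latency_ms": statistics.median(latencies),
        "share_under_400ms": sum(ms < 400 for ms in latencies) / len(latencies),
        "completion_rate": completed / len(log),
    }


print(summarize(interactions))
```

Tracking the share of responses under the latency target alongside the median helps surface outliers, such as the single 950ms response in this sample, that an average alone would blur.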

Organizations implementing Continuous Parallel Architecture typically see 40-60% improvements across these metrics within the first quarter of deployment.

The Competitive Advantage of Early Adoption

Voice AI is rapidly becoming table stakes for enterprise customer experience. The organizations that deploy Continuous Parallel Architecture first will establish significant competitive advantages in customer satisfaction, operational efficiency, and cost management.

As sequential pipeline limitations become more apparent, enterprises will face a choice: invest in yesterday’s architecture or leap directly to parallel processing systems that can evolve with future requirements.

The window for competitive differentiation through voice AI architecture is open now, but it won’t remain open indefinitely. Market leaders are already recognizing the strategic importance of parallel processing capabilities.

Ready to transform your voice AI with Continuous Parallel Architecture? Book a demo and experience the difference that parallel processing makes for enterprise voice AI performance.