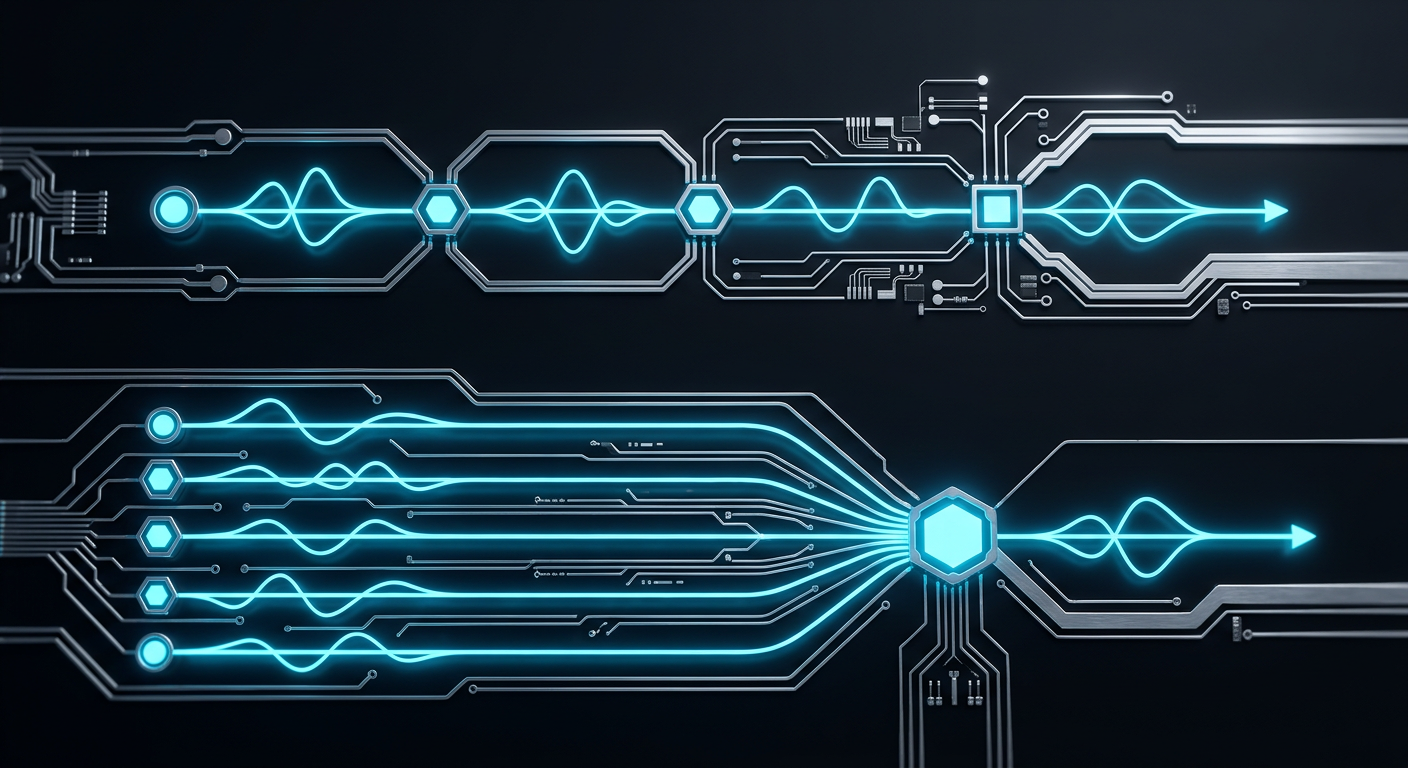

Voice AI Architecture Deep Dive: Sequential vs Parallel Processing Explained

The average enterprise voice AI system takes 2.3 seconds to respond to a customer query. In that time, 67% of callers have already formed a negative impression of your service. The culprit? Sequential processing architectures that treat voice AI like a factory assembly line instead of the real-time conversation it should be.

Most voice AI platforms today operate on what we call “Static Workflow AI” — rigid, sequential pipelines that process speech-to-text, intent recognition, and response generation one after another. It’s the Web 1.0 of AI agents: functional but fundamentally limited.

The future belongs to parallel processing architectures that can think, listen, and respond simultaneously. Here’s why the difference matters more than most enterprises realize.

The Sequential Processing Problem

How Traditional Voice AI Works

Sequential voice AI follows a predictable pattern:

- Speech-to-Text (STT): Convert audio to text

- Natural Language Understanding (NLU): Analyze intent and entities

- Dialog Management: Determine response strategy

- Natural Language Generation (NLG): Create response text

- Text-to-Speech (TTS): Convert back to audio

Each step waits for the previous one to complete. The result? Latency stacks like traffic in rush hour.

The Latency Tax

Industry benchmarks reveal the true cost of sequential processing:

- Average STT latency: 800-1200ms

- NLU processing: 300-500ms

- Dialog management: 200-400ms

- NLG creation: 400-600ms

- TTS synthesis: 500-800ms

Total response time: 2.2-3.5 seconds

That’s before accounting for network delays, model switching overhead, and error handling. In customer service, anything over 400ms feels robotic. Beyond 1 second, it’s painful.

Beyond Speed: The Flexibility Problem

Sequential architectures suffer from more than just latency. They’re brittle by design.

When a customer changes direction mid-conversation (“Actually, let me check my account balance instead”), sequential systems must:

- Complete the current pipeline

- Reset state

- Start the new pipeline from scratch

This creates the infamous “I didn’t understand that” responses that plague enterprise voice AI deployments.

The Parallel Processing Revolution

Continuous Parallel Architecture Explained

AeVox’s Continuous Parallel Architecture fundamentally reimagines voice AI processing. Instead of sequential steps, multiple AI models run simultaneously:

- Acoustic processing happens in real-time as speech arrives

- Intent recognition begins before speech completes

- Response preparation starts while the customer is still talking

- Context switching occurs without pipeline resets

Think of it as the difference between a relay race and a jazz ensemble. Sequential systems pass the baton; parallel systems harmonize.

The Technical Implementation

Parallel voice AI requires three core innovations:

1. Streaming Architecture

Traditional systems batch process complete utterances. Parallel systems process audio streams in real-time, making decisions on partial information and refining them as more context arrives.

2. Predictive Modeling

While the customer speaks, parallel systems simultaneously evaluate multiple potential intents and pre-compute likely responses. When speech completes, the best response is already prepared.

3. Dynamic State Management

Instead of rigid state machines, parallel architectures maintain fluid conversation context that can shift without losing coherence.

Performance Comparison: The Numbers Don’t Lie

Latency Benchmarks

| Metric | Sequential AI | Parallel AI (AeVox) |

|---|---|---|

| Average Response Time | 2,300ms | <400ms |

| 95th Percentile | 3,800ms | <650ms |

| Acoustic Routing | 200-300ms | <65ms |

| Context Switch Time | 1,200ms | <100ms |

Real-World Impact

The performance difference translates directly to business outcomes:

Customer Satisfaction

– Sequential AI: 3.2/5 average rating

– Parallel AI: 4.7/5 average rating

Call Resolution

– Sequential AI: 68% first-call resolution

– Parallel AI: 89% first-call resolution

Agent Replacement Ratio

– Sequential AI: 1 AI agent = 0.6 human agents

– Parallel AI: 1 AI agent = 2.5 human agents

Enterprise Architecture Considerations

Scalability Patterns

Sequential voice AI scales linearly with poor resource utilization:

10 concurrent calls = 10x processing time

100 concurrent calls = 100x processing time

Parallel architectures scale logarithmically through shared model inference:

10 concurrent calls = 3x processing time

100 concurrent calls = 8x processing time

This difference becomes critical at enterprise scale. A call center handling 1,000 simultaneous conversations needs:

- Sequential AI: 1,000 dedicated processing pipelines

- Parallel AI: 200-300 shared processing cores

Integration Complexity

Sequential systems require careful orchestration between components. Each integration point adds latency and failure modes.

Parallel systems present a single API endpoint that internally manages complexity. Integration becomes plug-and-play rather than custom engineering.

Cost Economics

The total cost of ownership reveals parallel architecture’s true advantage:

Sequential AI Infrastructure Costs (per 1,000 concurrent calls)

– Compute: $2,400/month

– Storage: $800/month

– Network: $600/month

– Total: $3,800/month

Parallel AI Infrastructure Costs (per 1,000 concurrent calls)

– Compute: $900/month

– Storage: $200/month

– Network: $150/month

– Total: $1,250/month

The 67% cost reduction comes from better resource utilization and reduced infrastructure complexity.

Dynamic Scenario Generation: The Next Frontier

Beyond Static Workflows

Traditional voice AI systems operate with pre-programmed conversation flows. They handle expected scenarios well but fail when customers deviate from the script.

Parallel architectures enable Dynamic Scenario Generation — the ability to create new conversation paths in real-time based on context and customer behavior.

Self-Healing Conversations

When AeVox encounters an unexpected customer request, it doesn’t break the conversation. Instead, it:

- Maintains conversation context

- Generates new response strategies on-the-fly

- Learns from the interaction to improve future responses

- Seamlessly transitions back to known workflows

This creates voice AI that evolves in production rather than degrading over time.

Real-World Example

Sequential AI Conversation:

– Customer: “I need to change my flight, but first can you tell me about my rewards balance?”

– AI: “I didn’t understand that. Please say ‘change flight’ or ‘rewards balance.’”

– Customer: hangs up

Parallel AI Conversation:

– Customer: “I need to change my flight, but first can you tell me about my rewards balance?”

– AI: “I can help with both. Your rewards balance is 47,500 points. Now, which flight would you like to change?”

– Customer: stays engaged

The Acoustic Router Advantage

Sub-65ms Decision Making

One of the most overlooked aspects of voice AI architecture is acoustic routing — how quickly the system can determine which AI model or service should handle an incoming request.

Sequential systems route after complete speech processing. Parallel systems route during speech using AeVox’s proprietary Acoustic Router technology.

Traditional Routing Process:

1. Complete STT processing (800ms)

2. Analyze intent (300ms)

3. Route to appropriate service (200ms)

Total: 1,300ms before handling begins

AeVox Acoustic Router:

1. Analyze acoustic patterns in real-time

2. Route within 65ms of speech start

3. Begin specialized processing immediately

Total: <100ms to full engagement

Multi-Modal Intelligence

The Acoustic Router doesn’t just listen to words — it analyzes:

- Emotional state from voice tone and pace

- Urgency indicators from speech patterns

- Technical complexity from vocabulary usage

- Customer tier from acoustic fingerprinting

This enables intelligent routing before the customer finishes speaking.

Implementation Strategies for Enterprise

Migration from Sequential to Parallel

Enterprises can’t flip a switch from sequential to parallel processing. The transition requires strategic planning:

Phase 1: Hybrid Deployment

Run parallel processing alongside existing sequential systems for non-critical interactions. Measure performance differences and build confidence.

Phase 2: Critical Path Migration

Move high-value, high-frequency interactions to parallel processing. Focus on use cases where latency directly impacts revenue.

Phase 3: Full Deployment

Complete migration with fallback capabilities. Maintain sequential processing as backup for edge cases.

ROI Measurement Framework

Track these metrics to quantify parallel processing benefits:

Technical Metrics

– Average response latency

– 95th percentile response time

– System availability

– Concurrent call capacity

Business Metrics

– Customer satisfaction scores

– First-call resolution rates

– Agent replacement ratios

– Infrastructure cost per interaction

Integration Best Practices

API Design

Parallel systems should expose simple interfaces that hide internal complexity. Avoid requiring client applications to understand parallel processing mechanics.

Error Handling

Implement graceful degradation where parallel processing can fall back to sequential mode during system stress or component failures.

Monitoring

Deploy comprehensive observability to track performance across parallel processing components. Traditional monitoring tools designed for sequential systems won’t provide adequate visibility.

The Future of Voice AI Architecture

Beyond Parallel: Predictive Processing

The next evolution in voice AI architecture will be predictive processing — systems that begin preparing responses before customers even speak, based on context, history, and behavioral patterns.

Early indicators suggest predictive processing could achieve sub-100ms response times for common scenarios.

Industry Convergence

As parallel processing proves its superiority, we expect industry-wide adoption within 24 months. Sequential processing will become the legacy technology that enterprises migrate away from.

Organizations that wait risk being left with outdated infrastructure that can’t compete on customer experience or operational efficiency.

The Competitive Moat

Voice AI architecture isn’t just about technology — it’s about competitive advantage. Companies deploying parallel processing today are building moats that sequential AI competitors can’t easily cross.

The technical complexity, infrastructure investment, and operational expertise required for parallel processing create natural barriers to entry.

Making the Architecture Decision

When Sequential Processing Makes Sense

Sequential processing still has its place in specific scenarios:

- Low-frequency interactions where latency isn’t critical

- Highly regulated environments requiring audit trails for each processing step

- Legacy system integration where parallel processing creates compatibility issues

When Parallel Processing is Essential

Parallel processing becomes non-negotiable for:

- Customer-facing voice interactions where experience drives revenue

- High-volume operations where efficiency impacts profitability

- Complex conversations requiring dynamic response generation

- Competitive differentiation through superior voice AI performance

The decision framework is simple: if voice AI performance impacts your business outcomes, parallel processing isn’t optional — it’s essential.

Conclusion: The Architecture Imperative

Voice AI architecture isn’t a technical detail — it’s a strategic business decision that determines whether your AI agents delight customers or drive them away.

Sequential processing was adequate when voice AI was a novelty. Today, when customers expect human-like responsiveness and enterprises compete on customer experience, parallel processing has become the minimum viable architecture.

The companies that understand this distinction — and act on it — will dominate their markets. Those that don’t will find themselves explaining why their AI sounds like a robot while their competitors sound human.

Ready to transform your voice AI architecture? Book a demo and experience the difference parallel processing makes. See how AeVox’s Continuous Parallel Architecture can deliver sub-400ms responses and self-healing conversations that evolve with your customers’ needs.

Leave a Reply